Why PySpark append and overwrite write operations are safer in Delta Lake than Parquet tables | Delta Lake
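
A minimal toy model of the difference, assuming a heavily simplified setup. A plain Parquet overwrite clears the target directory before the new files exist. Delta Lake makes new files visible only once a commit entry lands in its `_delta_log` transaction log. `ToyTable` and its methods are hypothetical and belong to neither API. The job crash is simulated with `fail_after`.

```python
import uuid


class ToyTable:
    """Hypothetical model of a table directory, with or without a commit log.

    Not the Delta Lake or Spark API; it only mimics when files become visible.
    """

    def __init__(self, use_log):
        self.use_log = use_log
        self.files = {}  # file name -> rows stored in that file
        self.log = []    # log mode only: each entry is the full set of live files

    def write(self, batches, mode="append", fail_after=None):
        """Write one file per batch; raise after `fail_after` files to simulate a crash."""
        if mode == "overwrite" and not self.use_log:
            self.files.clear()  # plain Parquet: old data is deleted before new data exists
        new_files = set()
        for i, rows in enumerate(batches):
            if fail_after is not None and i == fail_after:
                raise RuntimeError("simulated job failure")
            name = f"part-{uuid.uuid4().hex}.parquet"
            self.files[name] = list(rows)
            new_files.add(name)
        if self.use_log:
            # The commit is a single step: append one entry naming the new snapshot.
            previous = self.log[-1] if self.log else set()
            self.log.append(new_files if mode == "overwrite" else previous | new_files)

    def read(self):
        """Readers see every file in the directory, or only the last committed snapshot."""
        if self.use_log:
            live = self.log[-1] if self.log else set()
        else:
            live = self.files.keys()
        return sorted(row for name in live for row in self.files[name])


parquet, delta = ToyTable(use_log=False), ToyTable(use_log=True)
for table in (parquet, delta):
    table.write([[1, 2], [3]])
    try:
        table.write([[10], [20], [30]], mode="overwrite", fail_after=2)
    except RuntimeError:
        pass

print(parquet.read())  # [10, 20]: old rows gone, only part of the new rows visible
print(delta.read())    # [1, 2, 3]: the failed overwrite never committed
```

In the log-based model, the files left behind by the failed job still exist on disk, but no reader ever sees them because no snapshot refers to them.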

how to read from HDFS multiple parquet files with spark.index.create.mode("overwrite").indexBy($"cellid").parquet · Issue #95 · lightcopy/parquet-index · GitHub

Idempotent file generation in Apache Spark SQL on waitingforcode.com - articles about Apache Spark SQL
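
One common way to make a retried write idempotent is sketched below. It assumes the job can derive a stable batch identifier and builds output file names deterministically from it, so a retry replaces its earlier files instead of adding duplicates next to them. This is not necessarily the approach the linked article takes. Spark's default file names embed a random per-job UUID (for example `part-00000-<uuid>-c000.snappy.parquet`), which is why a blind retry in append mode can duplicate data. `output_name` and `write_batch` are hypothetical helpers, and a dict stands in for the output directory.

```python
import hashlib


def output_name(job_id, batch_id, partition):
    """Deterministic file name: the same inputs always produce the same name."""
    key = f"{job_id}/{batch_id}/{partition}".encode()
    return f"part-{partition:05d}-{hashlib.sha256(key).hexdigest()[:16]}.parquet"


def write_batch(store, job_id, batch_id, rows_by_partition):
    """Write one file per partition into `store`, a dict standing in for a directory."""
    for partition, rows in rows_by_partition.items():
        store[output_name(job_id, batch_id, partition)] = list(rows)


store = {}
batch = {0: [1, 2], 1: [3]}
write_batch(store, "daily-export", 42, batch)
write_batch(store, "daily-export", 42, batch)  # simulated retry of the same batch
print(len(store))  # 2: the retry replaced its own files rather than adding two more
```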

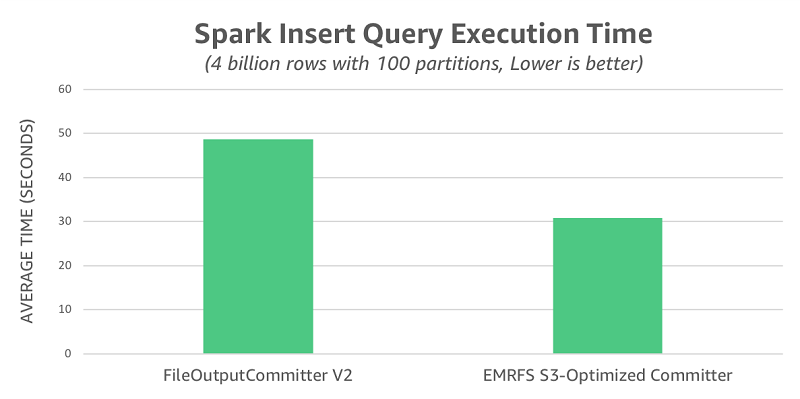

Improve Apache Spark write performance on Apache Parquet formats with the EMRFS S3-optimized committer | AWS Big Data Blog

apache spark - How to confirm if insertInto is leveraging dynamic partition overwrite? - Stack Overflow
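
Spark chooses between the two behaviours with `spark.sql.sources.partitionOverwriteMode` (`static` by default, or `dynamic`). That setting governs `df.write.mode("overwrite").insertInto(...)` on partitioned data source tables. Hive SerDe tables may follow Hive's own rules, so check them in your Spark version. The pure-Python sketch below models only the observable outcome, assuming an overwrite with no explicit partition spec. Static mode replaces every existing partition, while dynamic mode replaces only the partitions present in the incoming rows. This suggests a simple check: if a partition missing from the new data survives the write, dynamic mode was in effect. `insert_overwrite` is a hypothetical helper, not a Spark API.

```python
def insert_overwrite(table, new_rows, partition_col, mode="static"):
    """Model INSERT OVERWRITE without a partition spec; returns {partition: rows}."""
    incoming = {}
    for row in new_rows:
        incoming.setdefault(row[partition_col], []).append(row)
    if mode == "static":
        return incoming  # every existing partition is dropped and replaced
    if mode == "dynamic":
        result = dict(table)
        result.update(incoming)  # only partitions present in new_rows are replaced
        return result
    raise ValueError(f"unknown partitionOverwriteMode: {mode}")


table = {
    "2024-01-01": [{"day": "2024-01-01", "v": 1}],
    "2024-01-02": [{"day": "2024-01-02", "v": 2}],
}
update = [{"day": "2024-01-02", "v": 20}]
print(sorted(insert_overwrite(table, update, "day", "static")))   # ['2024-01-02']
print(sorted(insert_overwrite(table, update, "day", "dynamic")))  # ['2024-01-01', '2024-01-02']
```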

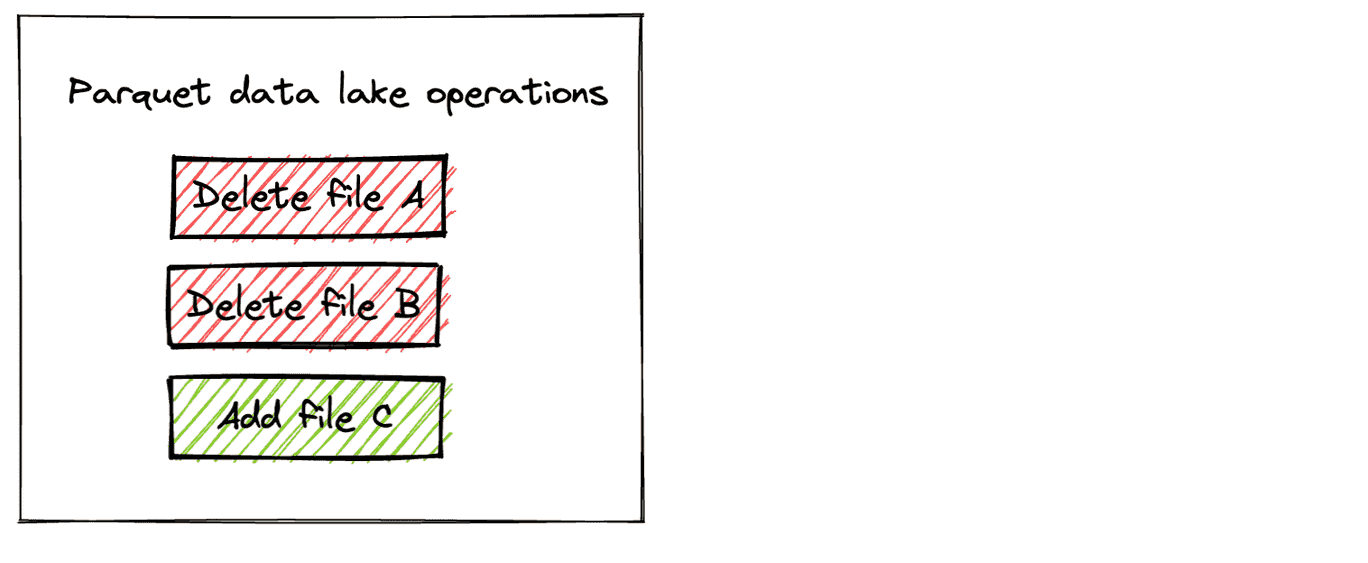

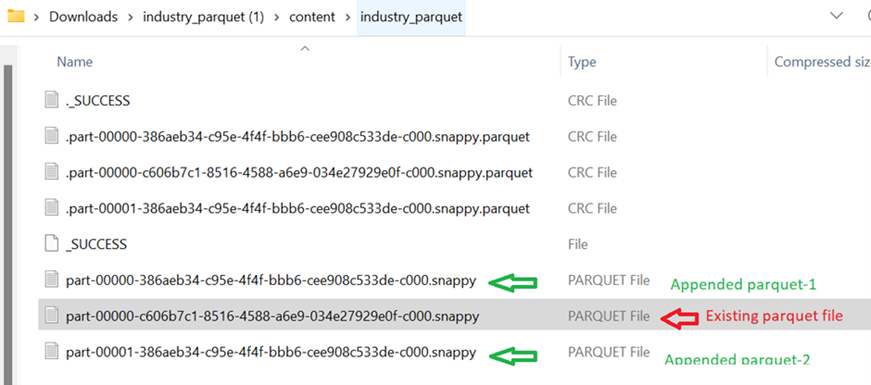

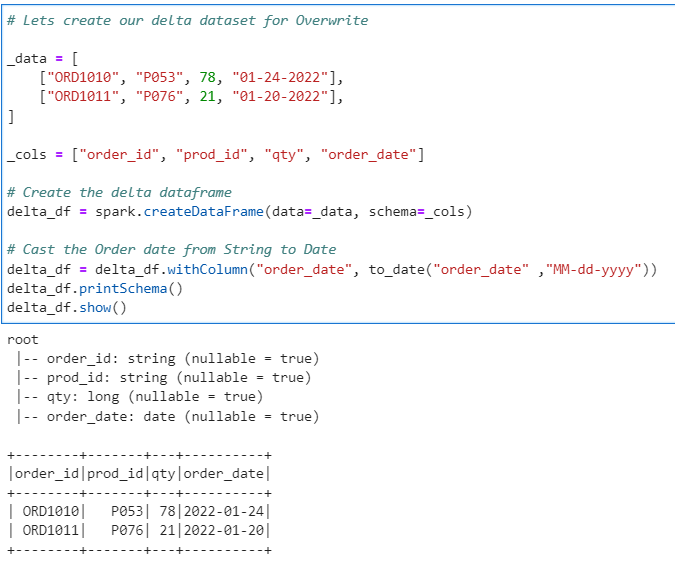

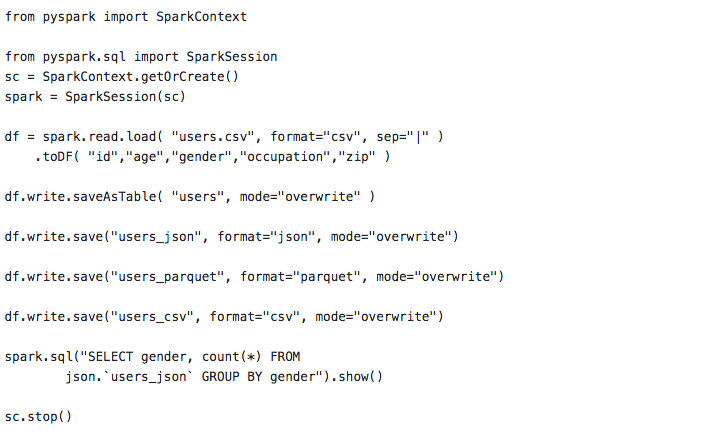

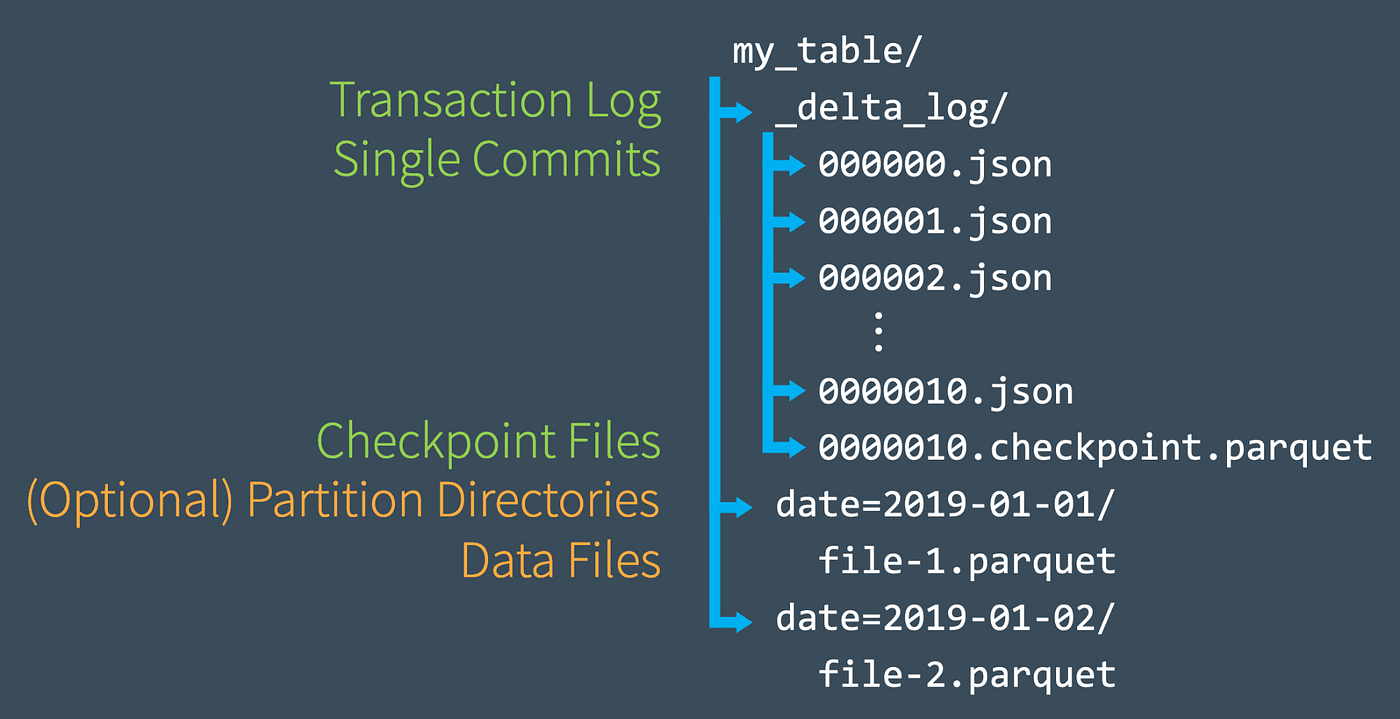

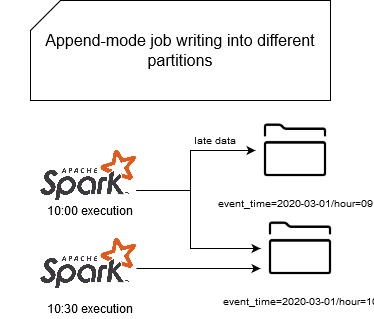

How to handle writing dates before 1582-10-15 or timestamps before 1900-01-01T00:00:00Z into Parquet files
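
These cutoff dates come from a calendar switch. Spark 2.x stored dates using a hybrid calendar (Julian before 1582-10-15, Gregorian from then on). Spark 3.0 and later use the proleptic Gregorian calendar, so for old values the same stored day count can name a different date. Spark exposes this through rebase settings such as `spark.sql.parquet.datetimeRebaseModeInWrite` (`EXCEPTION`, `CORRECTED` or `LEGACY`; named `spark.sql.legacy.parquet.datetimeRebaseModeInWrite` in 3.0 and 3.1) and `spark.sql.parquet.int96RebaseModeInWrite` for INT96 timestamps. Check the names against the documentation for your Spark version. The sketch below does not touch Spark. It only computes days since 1970-01-01 under each calendar with the standard Julian Day Number formulas, to show why a rebase is needed. The function names are hypothetical.

```python
import datetime

EPOCH_JDN = 2440588       # Julian Day Number of 1970-01-01 (Gregorian)
CUTOVER = (1582, 10, 15)  # first day of the Gregorian calendar


def _jdn_base(year, month, day):
    """Shared part of the Julian and Gregorian day-number formulas."""
    a = (14 - month) // 12
    y = year + 4800 - a
    m = month + 12 * a - 3
    return y, day + (153 * m + 2) // 5 + 365 * y + y // 4


def gregorian_days(year, month, day):
    """Days since 1970-01-01, reading the date in the proleptic Gregorian calendar."""
    y, base = _jdn_base(year, month, day)
    return base - y // 100 + y // 400 - 32045 - EPOCH_JDN


def julian_days(year, month, day):
    """Days since 1970-01-01, reading the date in the Julian calendar."""
    _, base = _jdn_base(year, month, day)
    return base - 32083 - EPOCH_JDN


def hybrid_days(year, month, day):
    """Julian before the cutover, Gregorian on and after it."""
    if (year, month, day) < CUTOVER:
        return julian_days(year, month, day)
    return gregorian_days(year, month, day)


# Python's datetime.date also uses the proleptic Gregorian calendar.
print(gregorian_days(1000, 1, 1) == (datetime.date(1000, 1, 1) - datetime.date(1970, 1, 1)).days)  # True
# The same label "1000-01-01" is 5 days apart under the two schemes.
print(hybrid_days(1000, 1, 1) - gregorian_days(1000, 1, 1))  # 5
# The hybrid calendar has no gap: Julian 1582-10-04 is followed by 1582-10-15.
print(hybrid_days(1582, 10, 15) - hybrid_days(1582, 10, 4))  # 1
```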